The Future Belongs to Implanted Brain Chips

Ray Kurzweil, an artificial intelligence visionary who predicted the current timeline of AI development with relative accuracy more than 20 years ago, recently made clear once again in his book “The Singularity Is Nearer” that progress will not stop at artificial intelligence.

The future belongs to implanted brain chips, so that in a few years we will merge with machines; knowledge will be downloaded rather than learned.

Neuralink is a harbinger of things to come, a pioneer, so to speak. And it clearly shows that the approach works.

Brain-Computer Interfaces (BCI), Explained

Written by Brooke Becher


Brain-computer interfaces (BCI) are devices that create a direct communication pathway between a brain’s electrical activity and an external output. Their sensors capture electrophysiological signals transmitted between the brain’s neurons and relay that information to an external source, like a computer or a robotic limb, which essentially lets a person turn their thoughts into actions.

These brain chips can sit over the scalp in a wearable device, be surgically placed under the scalp or even be implanted within brain tissue. The idea is that the closer the chip is to the brain’s neural network, the clearer, or more “high definition,” the signal it can pick up.

Brain-Computer Interface Definition

A brain-computer interface (BCI) is a device that lets the human brain communicate with and control external software or hardware, like a computer or robotic limb.

Perhaps the best-known example of a brain-computer interface is Neuralink’s chip, which was implanted into a quadriplegic patient’s brain in 2024 and allows him to control a computer.

What Is a Brain-Computer Interface?

Brain-computer interfaces are devices that process brain activity and send signals to external software, allowing a user to control devices with their thoughts.

With BCI technology, scientists envision a day when patients with paralysis, muscle atrophy and other conditions could regain motor functions. Rehabilitation services could also adopt BCIs to accelerate recovery from injuries.

Ramses Alcaide, CEO of neurotech startup Neurable, which develops non-invasive brain-computer interfaces in the form of headphones, sees potential for BCI-enhanced devices to become an everyday item for the average person.

“If we can make brain-computer interfaces accessible and seamless enough then they can be integrated into our daily lives, just as we use smartphones or laptops today,” Alcaide told Built In. “But in order to truly become a ubiquitous tool, they need to be comfortable, intuitive and reliable enough that people can use them without consciously thinking about them, similar to how we use a mouse or keyboard to interact with a computer.”

Excitement around the possibilities of BCI has resulted in a thriving market, which is expected to roughly triple in size from $2 billion in 2023 to $6.2 billion by the end of the decade.
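
To put those market figures in perspective, the short Python sketch below works out the overall growth multiple and the implied compound annual growth rate. It assumes that “the end of the decade” means 2030, which gives a seven-year horizon; that end year and the helper name `implied_cagr` are assumptions made for illustration, not figures from the forecast itself.

```python
def implied_cagr(start_value, end_value, years):
    """Compound annual growth rate implied by a start value, end value and horizon."""
    return (end_value / start_value) ** (1 / years) - 1


START_BILLIONS = 2.0   # market size in 2023, from the article
END_BILLIONS = 6.2     # projected market size, from the article
YEARS = 2030 - 2023    # assumption: "end of the decade" is read as 2030

growth_multiple = END_BILLIONS / START_BILLIONS
cagr = implied_cagr(START_BILLIONS, END_BILLIONS, YEARS)

print(f"Growth multiple: {growth_multiple:.1f}x")
print(f"Implied annual growth: {cagr:.1%}")
```

Under that assumption the market grows about 3.1 times overall, which corresponds to roughly 17.5% growth per year.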

How Do Brain-Computer Interfaces Work?

Brain-computer interfaces are modeled after the electrophysiology of a brain’s neural network. When we make a decision, or even think about making a decision, electrochemical signals spark. This activity takes place in our nervous system, more specifically in the gaps between neurons, known as synapses, as the neurons communicate back and forth.

In order to capture this brain activity, BCIs place electrodes close to these conversations. These sensors detect voltages, measuring the frequency and intensity of the “spikes” as neurons fire or potentially fire.

“It’s like a microphone; but in this case, we’re listening to electrical activity instead of sound,” said Craig Mermel, president and chief product officer at Precision Neuroscience, a startup developing a semi-invasive, reversible neural implant. “We’re picking up the electrical chatter of the brain’s neurons communicating with each other.”
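
The voltage-sensing step can be illustrated with a minimal Python sketch of threshold-based spike detection, which reports when each spike occurred (frequency) and how large it was (intensity). This is a simplified illustration under stated assumptions, not how any particular BCI product works: the function name `detect_spikes`, the threshold of four noise standard deviations, the 2-millisecond refractory window and the synthetic voltage trace are all hypothetical choices made for the example.

```python
import math
import statistics


def detect_spikes(voltages, sample_rate_hz, threshold_sd=4.0, refractory_s=0.002):
    """Return (spike_times_s, spike_amplitudes) for upward threshold crossings."""
    # Robust noise estimate: median absolute deviation, scaled so that it
    # approximates a standard deviation for Gaussian noise.
    median = statistics.median(voltages)
    mad = statistics.median(abs(v - median) for v in voltages)
    noise_sd = mad / 0.6745
    threshold = median + threshold_sd * noise_sd
    refractory = max(1, round(refractory_s * sample_rate_hz))

    times, amplitudes = [], []
    i = 1
    while i < len(voltages):
        # A spike starts where the trace crosses the threshold from below.
        if voltages[i - 1] <= threshold < voltages[i]:
            window = voltages[i:i + refractory]
            peak_offset = max(range(len(window)), key=window.__getitem__)
            times.append((i + peak_offset) / sample_rate_hz)
            amplitudes.append(window[peak_offset] - median)
            i += refractory  # skip ahead so one spike is not counted twice
        else:
            i += 1
    return times, amplitudes


# Hypothetical one-second recording: low-level background plus three spikes.
SAMPLE_RATE_HZ = 1000
trace = [math.sin(0.3 * n) for n in range(1000)]
for spike_index in (100, 400, 750):
    trace[spike_index] += 50.0

spike_times, spike_amplitudes = detect_spikes(trace, SAMPLE_RATE_HZ)
firing_rate_hz = len(spike_times) / (len(trace) / SAMPLE_RATE_HZ)
print(spike_times, firing_rate_hz)
```

Real recordings are usually band-pass filtered first and may be sorted by spike shape; the sketch skips both steps to keep the core idea visible.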

That information is then fed through local computer software, where it’s translated in a process known as neural decoding. This is where a variety of machine learning algorithms and other artificial intelligence agents take over, converting complex data sets collected from brain activity into a programmable understanding of what the brain’s intention might be.
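
Neural decoding covers many different algorithms. As one deliberately simple stand-in, the Python sketch below uses a nearest-centroid classifier: it averages the firing rates recorded while a user intended each action, then labels a new reading with the closest average. The channel count, the firing-rate numbers, the intent labels and the function names `fit_centroids` and `decode` are all hypothetical, and production decoders are considerably more sophisticated.

```python
import math


def fit_centroids(features, labels):
    """Average the feature vectors for each label to get one centroid per intent."""
    sums, counts = {}, {}
    for vector, label in zip(features, labels):
        running = sums.setdefault(label, [0.0] * len(vector))
        for j, value in enumerate(vector):
            running[j] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [s / counts[label] for s in total] for label, total in sums.items()}


def decode(centroids, vector):
    """Return the intent whose centroid is closest (Euclidean distance) to the reading."""
    return min(centroids, key=lambda label: math.dist(centroids[label], vector))


# Hypothetical training data: firing rates (spikes per second) on three
# electrode channels, recorded while the user imagined moving left or right.
training_rates = [[20, 5, 7], [22, 4, 6], [6, 19, 8], [5, 21, 7]]
training_intents = ["left", "left", "right", "right"]

centroids = fit_centroids(training_rates, training_intents)
print(decode(centroids, [19, 6, 7]))   # -> left
print(decode(centroids, [7, 18, 9]))   # -> right
```

The decoded label is what downstream software would turn into an action, such as moving a cursor or a robotic limb.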

Invasive vs. Non-Invasive BCIs

Brain-computer interfaces come in two main types: invasive and non-invasive.

- Invasive BCIs: BCIs that are directly connected to a patient’s brain tissue and implanted through surgical procedures. Because there are major risks that come with surgery, invasive BCIs are more appropriate for patients looking to recover from severe conditions like paralysis, injuries and neuromuscular disorders.

- Non-Invasive BCIs: BCIs that involve wearing a device with electrical sensors that serve as two-way communication channels between a patient’s brain and a machine. They produce weaker signals since they’re not directly connected to brain tissue. As a result, non-invasive BCIs are better suited for purposes like virtual gaming, augmented reality and guiding the actions of robots and other technologies.

Applications of Brain-Computer Interfaces

“The near-term goal [of brain-computer interfaces] is to give the abilities back to those who have lost them,” said Sumner Norman, a scientist at nonprofit startup Convergent Research and former chief brain-computer interface scientist at software firm AE Studio. “But in the long term, this tech is also intended to create a kind of tertiary cortex, or another level of the human brain function and an executive function that would allow us to be almost superhuman.”

These are some of the more common use cases of brain-computer interfaces:

Robotic Limbs and Wheelchairs

By supplying a real-time neural feedback loop that rewires the brain, BCIs are capable of restoring movement, mobility and autonomy for paralyzed and disabled patients, improving their quality of life. In more chronic cases, robotic devices and limbs are integrated.

Wireless Headsets

Headsets offer a non-invasive approach to brain-computer interfaces. Some boost productivity and enhance focus, as seen with Neurable’s Enten, while others help restore motor function in an individual’s upper extremities following a stroke, such as the IpsiHand system by Neurolutions Inc.

Spellers

Non-verbal individuals, who may be stuck in a “locked in” state following a stroke or severe injury, can use eye movement for computer-augmented communication.

Smartphone and Smart-Home Device Interface

In several studies, users have controlled social networking apps, email, virtual assistants and instant messaging services without using motor skills. Dimming the lights or changing the channel on a TV are examples of how BCIs can be adapted in the home.

Drones

The Department of Defense has funded research to develop hands-free drones for military use. This would allow soldiers to telepathically control swarms of unmanned aerial vehicles.

Examples of Brain-Computer Interfaces

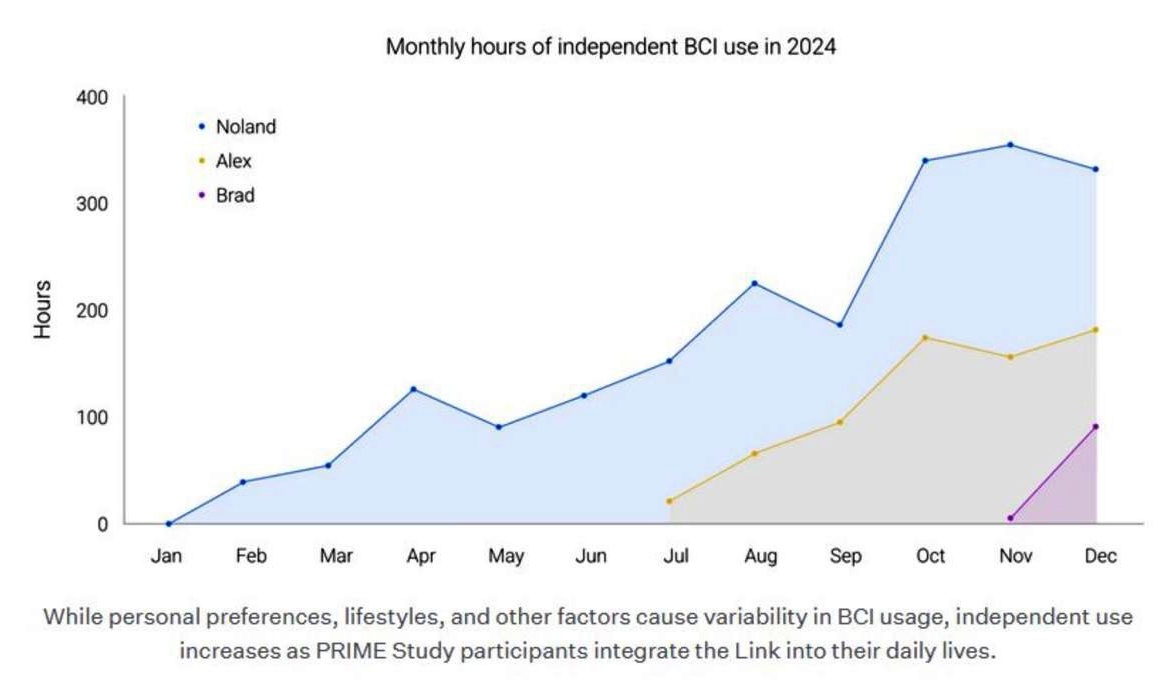

Neuralink’s Coin-Sized Brainchip

Neuralink, headed by Elon Musk, has developed a coin-sized surgical implant. In order to monitor brain activity as closely as possible, Neuralink’s device, the Link, uses micron-scale wires of electrodes that fan out into the brain. Its primary focus is to treat paralysis. The company has inserted its implant in one patient and has plans to take on a second patient.

Neurable’s BCI-Enhanced Headphones

Neurable is building headphones that interpret brain signals to level up productivity. Its first pair, Enten, uses advanced data analysis and signal processing techniques to make the most of a user’s peak focus periods throughout the day. The company’s MW75 Neuro builds on the Enten, offering the same BCI capabilities coupled with more secure data encryption and a mobile app that makes it easier to review data-driven insights.

Precision Neuroscience’s Electrode-Packed Film

Precision Neuroscience is approaching brain-computer interface systems with a surgically implanted brain chip that’s minimally invasive and fully reversible. The Layer 7 Cortical Interface is a thin film of micro-electrodes, about one-fifth as thick as a human hair, that conforms to the brain’s cortex just under the skull without damaging any tissue. In June 2023, Precision conducted an in-human clinical study of its neural implant. It has since expanded its research to include studies at Penn Medicine and Mount Sinai Health System.

Synchron’s Endovascular Alloy Chip

Synchron is mapping the brain via blood vessels. Inserted through the jugular vein, the Stentrode is a neuroprosthesis placed in the superior sagittal sinus near the motor cortex. The eight-millimeter flexible alloy chip transmits neurological signals to a receiver unit implanted into the patient’s chest, which then translates thoughts into clicks and keystrokes on a computer or mobile device in real time. Synchron is primed to start clinical trials and has teamed up with OpenAI to help paralyzed patients respond to text and chat messages.

Blackrock Neurotech’s Mesh Lace

Blackrock Neurotech has been testing its devices in humans since 2004, over two decades of brain-computer interface development. Blackrock’s product portfolio has helped patients regain tactile function, movement of their own limbs and prosthetics, and the ability to control digital devices by thought alone. Its latest project, Neuralace, is a flexible, hexagonal mesh patch designed to conform to the fissures and sulci of the brain. Its large surface area can capture 10,000 neural channels, inching closer to whole-brain data capture.

Inbrain Neuroelectronics’ Graphene Chip

Inbrain Neuroelectronics aims to restore mobility in patients with disabilities. The company has designed a graphene chip implant, which not only tracks brain activity but also stimulates it. In addition, it sends much more powerful signals than the metallic chips typically used in BCIs. While the graphene chip will be tested on a patient at the University of Manchester as part of a brain tumor surgery, it could later be applied to patients with Parkinson’s disease.

Benefits of Brain-Computer Interfaces

Restore Mobility and Motor Functions

Following a course of neurological rehabilitation, brain chips and wearables can give patients direct control over exoskeletons and robotic limbs. This is made possible by reading signals directly from the brain, bypassing both the site of injury or disease (such as a severed spinal cord) and muscular activity altogether.

‘Mindwriting’ for Non-Verbal Individuals

With brain-computer interfaces, if you can think it, you can speak it. It’s just a matter of how fast neural decoding software can catch up.

A team from Stanford University found that its brain chip could decode 62 words per minute, which begins to approach the pace of natural conversation. The study featured a non-verbal patient with amyotrophic lateral sclerosis (ALS) and a pre-programmed vocabulary of 125,000 words, marking “a feasible path forward for using intracortical speech brain-computer interfaces to restore rapid communication to people with paralysis who can no longer speak.”

Treat Neurological Conditions

One study noted that individuals with ALS, cerebral palsy, brainstem stroke, spinal cord injuries, muscular dystrophies or chronic peripheral neuropathies may benefit from BCIs. Neural implants may be able to treat conditions or at least improve the quality of life for patients with chronic or terminal diagnoses.

Monitor Mental Health

Brain-computer interfaces may one day be able to ease psychiatric conditions, like bipolar disorder, obsessive-compulsive disorder, depression and anxiety. Through neurofeedback techniques or targeted electrical stimulation of specific areas of the brain, they could also help prevent more everyday conditions like burnout and fatigue.

“It may not look as flashy, because someone in the demo just looks a little bit happier,” said Norman, whose research at the California Institute of Technology as a postdoctoral fellow focused on this next generation of brain-computer interface tech. “But if you were offered a solution where I said, using a single device, I can treat any form of anxiety that you have, and also offer you sleep on demand and one hundred other applications that could make your life a little better than it was, I do think that quite a few people would adopt that technology.”

Cognitive Enhancement

Users can train their brains, including memory, executive function and processing speed, using the biofeedback they receive from a neural implant in real time. Similar to wearable tech and apps available today, users would be able to monitor their stats and self-regulate accordingly.

Understanding the Brain

While much of the brain remains a mystery, BCIs are creating a direct channel to our thoughts, complete with a process to decode the brain’s language.

Challenges of Brain-Computer Interfaces

Brain-computer interfaces have more than a half-century’s worth of research and several proofs of concept that have passed human trials. So what’s the holdup? The two largest hurdles keeping BCIs from widespread adoption have to do with regulatory approval and funding.

Regulatory Approval

Given that brain-computer interfaces are classified as a type of medical device, they fall under the jurisdiction of the FDA. Regardless of the product, the agency’s primary concern is patient safety.

The challenge is that BCIs currently exist in a league of their own. The devices themselves bring together a range of fields (implantable materials, safety-critical software, the Internet of Things and wearable medical devices, to name a few) that are not yet standardized. There are no predicate devices.

“These are new categories of devices,” Precision Neuroscience’s Mermel said. “So, until there’s a device that gets cleared for market by the FDA, it’s an open question of what type of evidence you have to show to demonstrate that the benefit of a device outweighs the risk.”

Cost and Reimbursement

If brain-computer interfaces make it to medical practice, who is going to pay for them? Or for the procedures? And what about the health-check follow-ups, ongoing maintenance and upgrades that support the technology over time?

Whether the tab gets picked up by healthcare and insurance companies, government subsidies or patients out of pocket will largely determine how accessible the devices are to the public and who, based on socioeconomic status, qualifies for a tech-augmented quality of life.

“It’s important to prioritize the needs and perspectives of end-users, especially the most vulnerable, such as those with disabilities, and consider the potential ethical implications of these technologies,” Alcaide said. “We must ensure that these technologies do not perpetuate social biases or further exacerbate existing inequalities